Our year-long investigation through 2017 reveals how difficult and complex it is to obtain support from the manufacturers of mission-critical devices that use the faulty Intel chip.

The Story to Date

In early 2017, Intel and its OEM partners announced that the Atom C2000 processor, codenamed Rangeley, which is intended for networking devices in the SMB-to-enterprise networking market, contained a bug in its silicon that affected the chip’s ability to start up correctly due to a weak clock signal bus line. This problem was terminal and could only be rectified with either a hardware fix or a new stepping of the chip with the issue corrected in silicon.

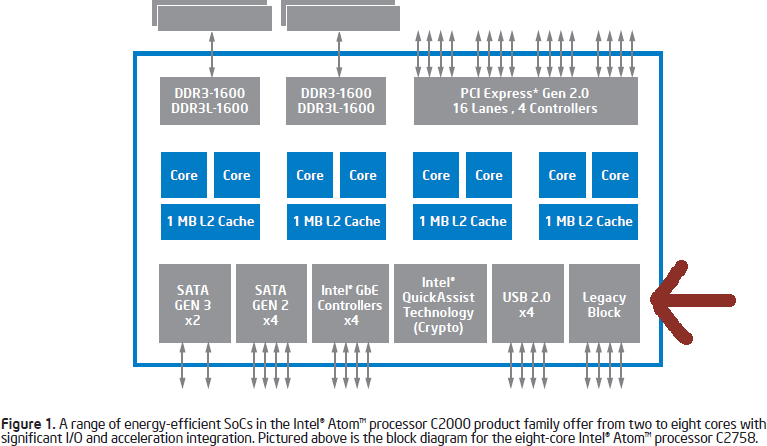

Rangeley is a system-on-a-chip package that integrates Intel’s low-power compute cores, platform chipset and multiple Gigabit networking. Typical applications are network attached storage (NAS), network switches, routers and micro servers. It first launched in 2013-14. It uses the Silvermont-architecture CPU cores found in Intel’s low-power ‘Bay Trail’ chips for low-power PCs and tablets, not Intel’s high-power mainline Core i series architecture, and the market segment it serves meant the chip had an extremely long life cycle. Part of the purpose of the new Rangeley Atom C series chips was to replace older embedded Intel and even AMD and non-x86 chips used in previous-generation products.

Intel’s remedy in early 2017 was to compensate its OEM partners for the faulty parts and warranty repairs. Each OEM instigated its own program to repair faulty customer devices and subsequently changed its manufacturing to use Intel’s newer fixed version of the processor.

Devices fail all the time and are covered by warranty, and this is not the first time Intel has declared a major erratum with its chipsets. Note I said declared, not recalled. Intel has not recalled a product for many years, as recalls carry legal ramifications and frameworks in developed countries. To the best of my knowledge, Intel’s last global recall was for its flawed 820 chipset for the Pentium III in the year 2000. Everything since has been handled through errata notices, and for significant issues affecting large numbers of chips in the field, Intel has set up a compensation fund or budget for the benefit of its OEM partners.

Significant errata in recent years have included the 6-series platform chipset from 2011 for 2nd Generation Core processors, which had faulty SATA ports that deteriorated over time, and the 8-series platform chipset from 2013 for 4th Generation Core processors, which had buggy USB 3.0 ports.

No silicon chip design is perfect, and almost all designs ever created have bugs known as ‘errata’: ‘minor’ known issues in the circuit design of the chip that are documented by the manufacturer. These will either be fixed in a future version of the chip, be worked around with a hardware, software or firmware fix, or not be able to be worked around at all. I will emphasise this: errata are a natural part of the semiconductor landscape.

Electronic devices fail all the time and may be fixed under warranty, so what makes Rangeley so different this time and what’s the big deal? The answer is quite simple. The chip is used in some mission critical devices and the owners of these devices can’t afford for these devices to fail, both from an operations and a financial point of view.

Consumer devices are covered by a limited warranty, and in some regions such as Australia, additional consumer protection rights apply outside of the manufacturer’s defined warranty period.

Enterprise-class devices, especially networking appliances, are typically only covered by paid support contracts of varying levels. Should a user need their device repaired or want access to ongoing software updates, the procedure is to keep the device under such a contract, mainly due to the item’s high cost of replacement.

This is where things got ugly in early 2017.

Rangeley is used in Cisco security appliances such as the ubiquitous ASA 5506-X, in Dell S-Series 10/40GbE top-of-rack open networking switches, in business NAS boxes from QNAP, Netgear, Seagate and LaCie, in micro PCs and routers from Netgate and Fortinet, and in many other industrial routers and compute boards from a number of niche vendors.

When this news broke, IT news publications of repute reported on the issue and on the initial set of vendors who had instigated repair and replacement programs. Cisco’s program drew particular attention, given the mass of affected devices and its introduction of a return program and a support note informing users of the issue and advising them to swap out devices under support.

While the issue was headline news at the time especially from highly syndicated sites such as The Register, the issue magically ‘went away’ with the IT media not following through on the issue over time.

This is where I come into the story, having spent almost a year chasing up repair and replacement before eventually giving up.

Our experience with Rangeley and Vendor statements on the problem

When I first learnt of this issue from the tech press, I was already aware I had a device with the affected processor: a pre-production 20TB NAS Pro box from Seagate dating back to 2014. I was concerned that it would randomly brick itself one day, leaving me in a conundrum over how to recover my data from the device and/or get it repaired. At this stage, knowing only vaguely which brands were affected, I was not that interested in the issue overall beyond concern for my own device.

At the Techleaders conference for Australian IT journalists in March 2017, I caught up with my local Seagate representative to discuss the issue, but he had not heard about it at all and asked if I would send him some links on the matter. However, he did understand the ramifications and suggested we could possibly swap my unit with another, equally old review unit if mine failed. That is not a foolproof fix, and it does not address the situation of customers with retail Seagate/LaCie NAS devices.

That was the last I heard from Seagate on the Rangeley Clock Signal fault.

Not long after the original media blasts, I noticed a mention of Dell in a news report and made the connection that its line of datacentre open networking switches uses this processor. Given the life cycle of those products, they too were affected, and Dell eventually published a technical advisory on the matter.

This was my ‘oh $h!t’ moment. As a system administrator, I have several of these high-end Dell enterprise switches in the network I manage, handling mission-critical traffic. Although they are set up to be redundant and highly available, a core switch failure represents a major outage on the network one way or another and, as many admins know, falling back to a non-redundant system is risky. If any further outage happens in that state then everything is down: a caught-with-your-pants-down scenario.

Based on Dell’s advisory, which was published around February 2017, I contacted both my Dell sales and tech support points of contact for advice on how to go about replacing these switches, as well as my PR contact to ask what Dell’s policy was on this issue beyond what was published in the advisory, which at the time looked ahead to a replacement program later in the year.

However, Dell, like the other vendors involved, was relatively quick to fix its devices in production, despite this family of switches having a long lead time. Switches made after March 2017 do not suffer from the fault, only ones made from 2014 to 2017.

Given I was personally exposed to two different brands with the fault and different approaches to customer remedies, I thought it would be prudent to reach out to other vendors we work with for media reviews to see how they are approaching the issue on an Australian or Global level.

I contacted my Australian PR contacts for Cisco, Netgear and Dell and received statements on the matter.

In March 2017, Dell Australia sent us the following statement:

"Customer satisfaction and product quality are central to Dell’s business. A component manufactured by a supplier and included in limited Dell EMC networking products has a clock signal that can potentially degrade over time. No failures of these products have been reported to date. Beginning in July, Dell will proactively begin replacing impacted products that are under warranty or that are covered by a customer’s service contract.

You can also access some FAQs regarding this on the support site here: http://www.dell.com/support/article/us/en/19/QNA44095/networking-clock-signal-qa?lang=EN"

Several items in this comment are interesting in retrospect: some were honoured and some were not. More on that later.

On the 3rd of March 2017, The Register published an executive statement from Netgear assigning blame and responsibility and defining a path for repair.

http://www.theregister.co.uk/2017/03/03/netgear_recalling_hardware_with_bad_intel_atoms/

"In a statement emailed to The Register, Richard Jonker, VP of SMB product line management for Netgear, said: "Netgear is taking full responsibility for this component supplier concern and we can state at this time that we are issuing a full-scale recall for the affected products. We will be contacting all registered owners of these products and providing a swap where they will receive a new product and the affected product will be returned to Netgear."

In its formal Knowledge Base article on the issue, Netgear did not actually recall the affected devices. As mentioned in the executive statement, it offered repairs for registered users, i.e. users with devices in warranty, whereas a formal, legally binding recall is broader reaching.

Note the statement says ‘component supplier’ ominously. During the period in which this story broke, February to March 2017, Intel’s name was deliberately withheld from vendor communications on the issue. Intel did not formally disclose to the media or public that its processors were faulty, apart from publishing an errata document listing the technical fixes to its products.

"NETGEAR will not be providing additional comment outside of the details outlined by the KB article below."

Cisco was also identified in the initial reporting at The Register, which I also followed up in March 2017, especially as Australia has strict consumer protection laws at a federal and state level.

https://www.cisco.com/c/en/us/support/web/clock-signal.html#~overview

https://www.theregister.co.uk/2017/02/03/cisco_clock_component_may_fail/

All we received from Cisco ANZ was the following equally vague statement, without even a link to further information. Again we have the ‘supplier of anonymity’, as Intel fixed the issue relatively silently and compensated its customers for repairs behind closed doors under a confidentiality umbrella.

"Cisco strives to deliver technologies and services that exceed customers’ expectations, and meet rigorous quality and customer experience standards. We became aware of an issue related to a clock signal component manufactured by one supplier. We have worked with the supplier to resolve the issue, and we’re providing information and support for our customers."

Since Intel did not actually issue a statement of their own in 2017 on the matter I did not follow up with them and focused on their OEM partners. The issue can actually be avoided altogether depending on how the OEM implemented Intel’s chip. The typical design is affected, but some more elaborate or specific designs are not.

Intel did not say much on the matter apart from their technical documentation, however Intel's Robert Holmes Swan, CFO and executive vice president, stated at the time:

"But secondly, and a little bit more significant, we were observing a product quality issue in the fourth quarter with slightly higher expected failure rates under certain use and time constraints..."

As for my media-centric investigation, I heard nothing further from the vendors I contacted for comment, either directly or via other IT news media. After the initial round of reporting from February to March 2017, the issue was ‘old news’.

Beyond statements and promises – our attempts at getting our Rangeley powered devices replaced

Our real world adventure to get Rangeley powered devices repaired/replaced focuses on Dell Datacentre grade switches which were purchased over the time frame of two to three years.

The Seagate NAS with the Rangeley Atom CPU mentioned is not covered under Seagate’s retail warranty.

Let’s look back, as a reminder, at what Dell stated in March 2017 in its official KB note on the repair program, and break down the statement.

http://www.dell.com/support/article/au/en/aubsd1/qna44095/networking-clock-signal-qa?lang=en

“Beginning in July, Dell will proactively begin replacing impacted products that are under warranty or that are covered by a customer’s service contract.”

This fragment marks the beginning of a long journey. Our efforts to get at least our oldest Dell switch replaced started in March 2017 and ran through to December 2017. Over this period of almost a year, we made no progress at all on replacing any of our Dell switches affected by this issue. It is purely a waiting game.

“Although the Dell EMC products with these components are currently performing normally and no failures have been reported to date (as of Aug 1, 2017), some product failures may occur over the years, beginning after the unit has been in operation for approximately 18 months.”

“Q: When does the 18 month windows for increased failure rate begin and what is the expected failure rate?

A: The 18 month timeframe for beginning to see increased failure rate is determined based off total system power-on runtime, not manufacturing or ship date. While Dell expects the failure rate to begin to increase at the 18 month mark, the anticipated failure rate for the first two years of operation is expected to be under extremely low.”

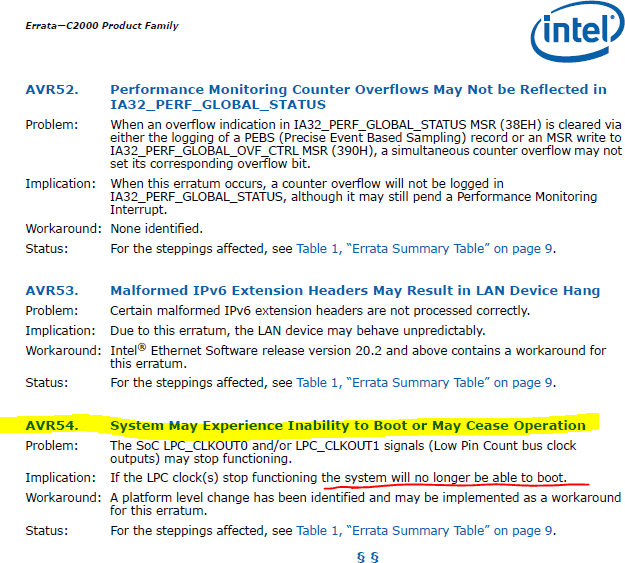

The metal layers that make up the silicon die of the Rangeley chip degrade over the stated 18 month period, after which the electrical integrity of the LPC (Low Pin Count) bus, which connects the chipset to the flash memory containing the firmware, is no longer reliable. Since the system needs a stable clock signal to talk to its companion chips, it fails to boot after a power outage or power cycle.

“Q: Do I need to contact Dell to get replacement product or be issued a Return Merchandise Authorization (RMA)?

A: No you do not need to proactively contact Dell to get replacement product. Dell will be proactively contacting all customers starting in the July-August 2017 timeframe to arrange for replacement product.”

This class of Dell networking equipment is sold on a B2B basis in some regions such as Australia. Through this interaction, Dell has a unique record of who has purchased each device and is able to contact these customers for after-sales service without relying on warranty card registration, a concept depended on in the Americas but not in other countries such as Australia.

Dell’s intention was to build up enough buffer stock to cover this return program, stock which would not necessarily be available on a typical weekly basis. A timeframe of several months can be a typical period in the electronics industry to build up inventory.

“Q: What if my switch is no longer under warranty?

A: To be eligible for the Proactive Replacement Program, customers must have had a valid warranty contract as of 2/7/2017 or later. Customers with effected products that have a warranty expired prior to 2/7/2017 may choose to purchase a service contract to have affected products replaced.”

If your warranty expired before 7 February 2017 and your device dies, despite the ‘18 month expected lifetime’ that has been described, you will not be able to have the device replaced for free and a valid paid service contract is required. The repair program might as well not exist, as no special handling is offered for the issue; Dell (and the other OEMs affected) just formally ‘recognise’ that their devices are faulty.

“Q: What if my switch is affected (was under warranty on Feb 7, 2017), but is no longer under warranty or service contract, and I experience a failure?

A: In this case the unit will be replaced as the program priority comes up. The replacement timing has no NBD (or other) timeline guarantees (in cases where there is no existing Support contract). To guarantee that units are replaced within the use case requirements, please keep the support contract current to ensure timely replacements.”

If you do not have a support contract, the repair program makes you eligible for replacement, but only at the lowest priority compared to customers with a support contract. ‘Use case requirements’ will mean whatever Dell wants it to mean: a return request must meet Dell’s criteria, and a working switch that has not yet failed, despite the ‘18 month life expectancy’, will be rejected, as it was in my case.

“Early warning detection can be enabled via a new patch release for the following affected models (S3048-ON, S6100-ON, Z9100-ON and C9010 platforms). This patch is available via dell.com/support.”

Since the design used by Dell and its design/manufacturing partners does not allow for a fix via a BIOS update, a countdown timer for the ‘ticking time bomb’ was added. At the time of publishing, however, I cannot say whether this is a timer, simply a flag that is displayed once particular conditions such as device lifetime are met, or code that actually monitors the performance of the clock signals for each domain in the chipset. The user can’t RMA ad hoc, so all the feature does is tell them when to be ready with a replacement device. The patch reads as ‘we have to do something, let’s add an alarm or countdown timer’. There is also the possibility that this early warning system gives misleading results; devices can still fail. It is the nature of the silicon die itself.

In summary, we can’t send these devices away to be fixed on our own time and must wait for the signal from Dell for when they are ready to exchange.

In addition, they are running the program to replace the oldest manufactured devices first, regardless of any existing failures.

Devices must be under warranty as of February 2017 to be eligible for the repair program. If the warranty has since expired, then those devices will be the lowest priority ones to be replaced under the program which has no expiry date.

The replacement program is also dependent on regional stock. These switches are high-end items that are not typically kept in large quantities and are sometimes built to order when stocks are low.

These switches were expensive, and yet we can’t send them away to get fixed!
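The eligibility rules in the FAQ answers quoted above can be sketched in a few lines of Python. This is only my reading of the published FAQ, not Dell's actual process; the function name `replacement_status` and the returned labels are hypothetical, and the only value taken from the source is the 7 February 2017 cut-off date.

```python
from datetime import date

# Cut-off date quoted in Dell's FAQ ("2/7/2017", i.e. 7 February 2017).
WARRANTY_CUTOFF = date(2017, 2, 7)


def replacement_status(warranty_end: date, has_current_contract: bool) -> str:
    """Hypothetical helper: classify a switch under the FAQ rules as read here."""
    if has_current_contract:
        # Covered by a warranty or paid service contract right now.
        return "eligible: replaced under contract terms"
    if warranty_end >= WARRANTY_CUTOFF:
        # Was covered on or after the cut-off, but cover has since lapsed.
        return "eligible: lowest priority, no timeline guarantee"
    # Cover lapsed before the cut-off and no contract has been purchased.
    return "not eligible: service contract must be purchased"


if __name__ == "__main__":
    print(replacement_status(date(2017, 6, 30), has_current_contract=False))
```

Note that nothing in these rules lets a customer trigger a replacement themselves; the sketch only says which queue a device would sit in.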

My understanding of the Cisco side of the problem is that it allowed RMAs en masse of affected devices regardless of their state, provided there was a current service contract.

I have described the initial global communication from Dell, both to the public via its website and in a media-specific comment. This messaging, as well as direct communication with Dell tech support, repeated what Dell had already said: wait for the July-August timeframe onwards for more information and replacement.

Having received this update on the matter, and not hearing anything else from the other vendors I contacted or even any user feedback, I waited from March to July/August for progress.

July/August came and went with the same message relayed back to us except with the timing updated to Quarter 4 2017. A reason given was build-up of stock, as I have previously described.

There was not much else I could do other than wait and try again.

Does our story end in Quarter 4 2017? Nope.

In very late November, Dell Tech Support advised that the timeframe was now December. That in itself is OK, but narrowing Quarter 4 down to December is a little more dubious. Again I break down the commentary:

“I have received update from our Proactive Field Replacement team, they will start contact customer by regional team in December. The team will work with each affected customer to replace any affected Dell EMC network product. As so far the exact timeline for a specific customer contact is difficult to predict.”

The issue was first disclosed in March 2017, and the remedy was then delayed to July, August, November and December.

Timelines shouldn’t be difficult to predict. One would hope a manufacturer is able to plan its manufacturing and inventory to the day, especially since many use just-in-time logistics methods. One would think all customers, regardless of the manufacturer, are treated equally, but we see words like “specific customer” and “each affected customer”.

“Feedback from our product team, even though we have customer with over units running in excess of 2 years, we have observed no failures. In our labs where we have much older units without appeared the issue. Failures at 24 months is a mere 0.6%. There is no epic failure imminent, and the issue may not be observed until a reboot or power cycle occurs.”

0.6% of 1 million units (my ballpark guesstimate of the installed base) is 6,000 units at one particular moment in time. Given this issue is one of deterioration and ageing, common sense says this number will increase over time. Besides, a statement from a corporation can often be taken with a grain of salt, as any figure of this nature comes with many ifs and buts. In the electronics and PC hardware industry, up to 10% is a figure I have often heard quoted as a normal failure rate.

The statement that there is “no epic failure imminent” contradicts the later remark that the issue “may not be observed until a reboot or power cycle occurs”. Well, you’ll be sure it’s #epicfail when your device no longer switches on! That’s my expert opinion anyway, as someone who has worked in the electronics assembly/repair and IT administration sectors.
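As a rough check of that arithmetic, here is a minimal Python sketch. The installed base of one million units is my own ballpark assumption rather than a Dell figure, and the function name `expected_failures` is purely illustrative.

```python
def expected_failures(installed_units: int, failure_rate_percent: float) -> int:
    """Number of units expected to have failed at a given failure rate."""
    return round(installed_units * failure_rate_percent / 100)


if __name__ == "__main__":
    installed_base = 1_000_000  # assumed ballpark, not a vendor figure

    # Dell's quoted failure rate at 24 months.
    print(expected_failures(installed_base, 0.6))   # 6000

    # The "up to 10%" figure often heard in the hardware industry, for scale.
    print(expected_failures(installed_base, 10.0))  # 100000
```

Even a rate that sounds tiny as a percentage translates into thousands of dead devices once the installed base is large.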

“Furthermore, in case of any unit failure occur, our ProSupport team here can help to troubleshooting and RMA issue hardware with your warranty entitlement.”

I tried in good faith to RMA our hardware under our warranty entitlement and it was knocked back as the device was not dead, despite its age and the announced replacement program combined with the mention that little to no failures were observed.

The ‘rush’ on my end to exchange the device comes from a planning and administrative point of view: I have made physical accommodations to easily remove the old device. Those who work in data centres will be aware of how difficult it is to remove certain devices, and naturally it is preferable for the operation of a large network to pre-emptively replace something before it’s too late.

As you read this story in February to March 2018, there have been no further updates on the matter since December 2017, and a full year has passed. This is significant given the 18 month life expectancy of the chipset and the length of warranties/support contracts offered by vendors.

There is the risk that the situation may change once this story goes to press…

A technical re-cap on the Intel Atom clock signal issue

Under heavy use, the signal on one of the buses degrades faster than normal and between 18 months and 3 years, the CPU will no longer boot, especially after a power outage/cycle.

To help prevent failure, avoid hard rebooting or power cycling an ageing device excessively.

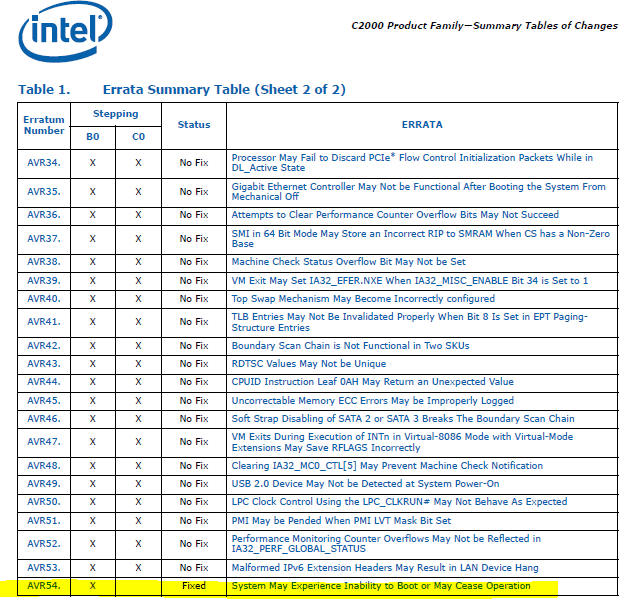

Intel documented this issue as errata AVR50 and later AVR54, listed in the January 2017 specification update document for the Atom Processor C2000 Family. A workaround is available, but the issue was permanently fixed in a new revision of the chipset. Intel maintains these documents for all its products, continuously listing new errata and their fixes.

Do these failure scenarios sound like “Epic failures”? Yes they do.

The ‘legacy block’ includes buses such as GPIO and LPC. General Purpose I/O lines are used in system-on-chip designs to interface with buttons, switches and displays that will trigger events in the processor such as standby, reset or wireless.

For example, the LEDs and wireless buttons on a typical router are enabled this way and development boards for makers and hobbyists expose many of these.

Low Pin Count is a 33 MHz bus used to connect the Intel platform chipset to auxiliaries such as the flash ROM that contains the system BIOS/firmware and controller chips that may provide legacy connectivity such as serial and parallel ports, floppy disk, and PS/2 keyboard and mouse. USB is natively available in the system chipset and is completely separate.

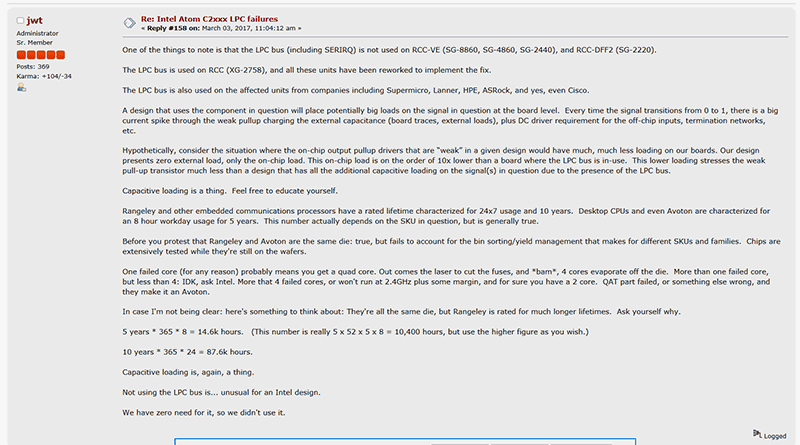

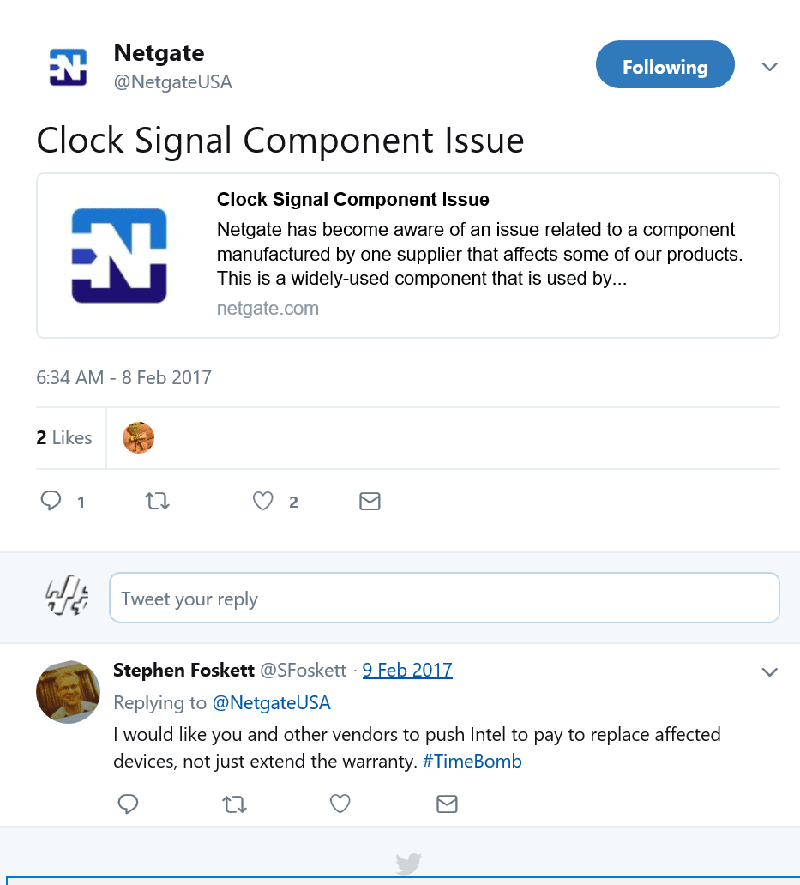

Netgate (not to be confused with Netgear) is a semi-niche networking vendor that produces networking devices such as firewalls running the popular BSD-based pfSense operating system. Some of its devices used the Atom C2000 chip, but Netgate chose to implement it its own way using an open source firmware approach, and as such avoided the trap of the faulty LPC bus, which would otherwise connect a serial flash EEPROM holding the BIOS code.

https://www.netgate.com/blog/clock-signal-component-issue.html

To quote an administrator on the pfSense forums:

“Not using the LPC bus is…unusual for an Intel design. We have zero need for it so we didn’t use it”

https://forum.pfsense.org/index.php?topic=125105.msg699015#msg699015

Using information gathered from Netgate, ADI (the ODM manufacturer of embedded and industrial PC motherboards for vendors like Netgate) and Intel documents, the workaround for errata AVR50 and AVR54 seems to involve BIOS updates that modify how the serial communication and interrupts behave on the LPC bus, specific to the particular board in the affected device. Additionally, a hardware rework on those affected devices reduces the electrical load/strength of the signals on the LPC bus and therefore reduces stress on the part.

Not all devices in the field have the ability to have their system BIOS updated. Just because a vendor may offer a firmware or OS update for, say, a black box firewall, router or switch, that update is typically for the main software operating system. The operating system would need access to the system BIOS in order to flash, or update, it. This functionality is often deliberately reserved for factory use only in order to prevent faults and preserve ‘security’, plus there’s the engineering work required by the vendor to ensure that mechanism works. A manufacturer may choose not to implement it for a function that may never be used in the life of the product, as they foresee their BIOS implementation as stripped down and basic, which ‘does not need’ to ever be updated…

I need to be clear, though: due to the silicon lottery and the sometimes obscure nature of the errata that occur in chips, owning an affected chip does not mean it will fail, just that it is very likely to fail. Vendors tend to claim otherwise, but stacks of faulty product appearing some time later, often years after the fact, with the same issues are better evidence of the truth.

Vendors will also tout industry standard or sub-industry-standard failure rates, but there is no objective way to verify those specific claims. Those within the industry with exposure to multiple examples of a device, such as administrators or repairers, would have a better grasp of the status quo regarding a faulty device.

If those numbers are so low, then what are the odds that, quite apart from the clock signal issue, one of the switches I administer has a fault with its flash memory card? Funnily enough, this issue can be related to the clock signal bug, as storage can be connected via the affected bus lines.

Mitigation and solving the problem for good

If this issue affected consumer products, as with the faulty 6-series chipset for Intel 2nd Gen Core CPUs, the dozens of known issues with Apple MacBooks, Macs and iPhones such as bad solder on GPUs, faulty FireWire ports and ‘bendgate’ on the iPhone 6 Plus, and a multitude of other common faults such as bad capacitors across all brands, an end user would simply be inconvenienced during the process of having the device repaired or replaced and, ignoring the financial implications, a replacement is easily sourced.

For business/enterprise gear, especially gear that runs services for third-party customers, things get a bit more complicated, as there are service level agreements in place that make a provider liable for outages to customer services, as well as any loss of reputation or damage to equipment.

SLAs are often defined as a high-90s percentage. Examples are:

- 99.9% uptime means a 43 minute outage per month.

- 99.99% uptime means 4.3 minutes outage per month.
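The figures above follow from simple arithmetic on the number of minutes in a month. A minimal Python sketch, assuming a 30-day month (the function name `allowed_downtime_minutes` is illustrative only):

```python
def allowed_downtime_minutes(uptime_percent: float, days_in_month: int = 30) -> float:
    """Minutes of outage per month permitted by an uptime percentage."""
    total_minutes = days_in_month * 24 * 60  # 43,200 minutes in a 30-day month
    return total_minutes * (100.0 - uptime_percent) / 100.0


if __name__ == "__main__":
    for sla in (99.9, 99.99, 100.0):
        print(f"{sla}% uptime allows {allowed_downtime_minutes(sla):.2f} minutes per month")
```

For 99.9% this gives roughly 43 minutes and for 99.99% roughly 4.3 minutes, so a single switch that fails to come back after a power cycle can consume a month's allowance many times over.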

Some providers advertise 100% uptime, which obviously means 0 minutes of outage per month. This is impossible regardless of the high availability technology or the provider. Third-party services external to a datacentre, such as power, often go out or become unstable; international telecommunication lines fail, such as in a storm or if a ship damages an undersea line; and commonly enough, contractors damage cables while doing construction or digging. In extreme cases, acts of God and natural disasters such as floods or hurricanes/cyclones can take out entire facilities.

No cloud or network infrastructure provider can fully mitigate against all these issues. The best possible is to ensure redundancy on a service and geographic level, so if a particular service is lost in one location such as a router, power or data line, other resources in another geography can be switched in.

Intel’s ‘Rangeley’ based Atom chips are, and were, used in hardware installed in such critical locations and sites, so any loss of functionality is of paramount concern.

Users of such equipment need to plan for and accommodate not only the possible future failure of any of these devices, but also if a device fails how it will be replaced and what the time frame is to replace it.

The core definition of the relationship between a paying customer and business of high repute should be the ease of obtaining support and service from that vendor, especially when there is a declared known issue relating to that device.

I can understand that a tier 1 OEM like Dell needs to manage its inventory and production levels so it can balance existing orders as well as buffer stock for repair programs, but given the price of these devices (>$10K AUD before options), a user should be able to request repair/replacement for affected devices whenever they please or need.

For an ordinary fault, the customer’s paid high-priority on-site warranty will cover any spot failures within a few hours, but Dell is refusing to allow any switches that have not yet died to be exchanged under this paid warranty program. Again, we must wait for the bat signal from Dell.

By then it will be too late, the damage has been done.

Dell’s intermediate fix for the issue is to add “early warning detection” of imminent failure via a patch to their switch operating system. Vendors typically do not add telemetry to their systems and devices indicating a future fault. Often devices detect a fault after the fact and provide a remedy, or perform scheduled maintenance. If a company like Apple added a readout to iPhone stating ‘your phone is dying’, the zombie horde would grab their torches and pitchforks in revolt!

For administrators, the only sure solution is to purchase a new switch at their own cost, schedule an outage, swap out the old switch for the new one and store the old, soon-to-be-faulty switch for future replacement. This requires capital investment, which some may not be able or willing to commit to.

In our case, as many of our switches have reached or exceeded the 18 month mark, they are in the danger zone. If there is an interruption in power, it is possible a switch may not come back after the outage, leaving the network in a state of disarray or a suboptimal state. The fact they are heavily operated 24x7 only degrades the product further. This is something that could have been avoided if Dell had simply allowed blanket replacement of devices without any assigned priority.

It is unreasonable for a purchaser of a good to replace it at their own expense when the vendor has admitted and disclosed to the public that the item has known issues or faults.

Again to use Apple as an analogue: when a repair program for an iPhone or Mac is announced, no devices are prioritised by age, and all devices falling within a specific series or time frame are equally eligible for repair regardless of age or mileage.

What should vendors do about the problem then, or what should they do differently? All manufacturers had to do was allow end users to RMA their devices on an ad hoc basis. Since production was fixed in February, the number of possibly affected units in the field is limited, and users should not expect a fast turnaround.

All manufacturers concerned should also replace customer devices out of warranty. An active warranty plan should not be required to repair devices with declared issues.

Intel should also help end users who cannot get service for their device.

Wrap Up

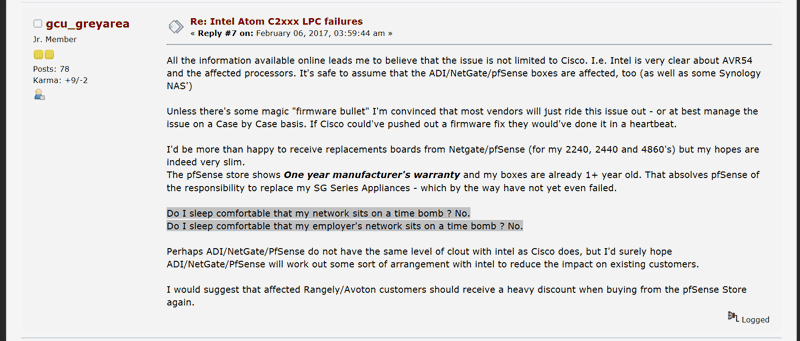

The title of this story includes ‘time bomb’, which may sound startling and is surely not appreciated by vendors, but the term is technically correct. The chip may fail at any time in the future, which is the definition of a ‘time bomb’. It is not just me saying this; it is the sentiment of the end users affected by this issue, as shown by two examples:

https://forum.pfsense.org/index.php?topic=125105.0

“Do I sleep comfortable that my network sits on a time bomb ? No.

Do I sleep comfortable that my employer's network sits on a time bomb ? No”

“I would like you and other vendors to push Intel to pay to replace affected devices, not just extend the warranty. #TimeBomb”

Intel remained quiet on the issue, placing its partners under confidentiality agreements not to mention its name in exchange for compensation for repairs and bad stock.

Each on its own, the affected vendors, ranging from Dell, QNAP, Seagate/LaCie, Netgear, Netgate, ADI, Cisco and Fortinet to others, all issued pretty much carbon-copy public statements in early 2017 on the Atom clock signal issue, saying they would repair users’ bad hardware as long as it was under warranty or within a several-year period.

Some of these manufacturers, such as Cisco and Netgate, have already replaced or repaired hardware. Seagate has done nothing public facing, and Dell made promises but kept delaying repairs throughout the entire calendar year of 2017. From what I have personally experienced, I do not know if or when a resolution is coming, at least for my affected data-centre-grade equipment.

Disclosure

Seagate NAS Pro provided for long-term review by Seagate Australia.

Vendors cited in this article were not apprised of this article before publication.

The writer is a consulting hardware and systems engineer to RackCorp.com. Dell hardware mentioned was purchased under standard business terms without any media discount.