AMD's Radeon R9 290X video card may be a watershed moment in PC graphics. Powered by the 'Hawaii' GPU, which is based on a beefed-up version of the Graphics Core Next architecture, this is the first card 'designed for gaming at 4K resolution'.

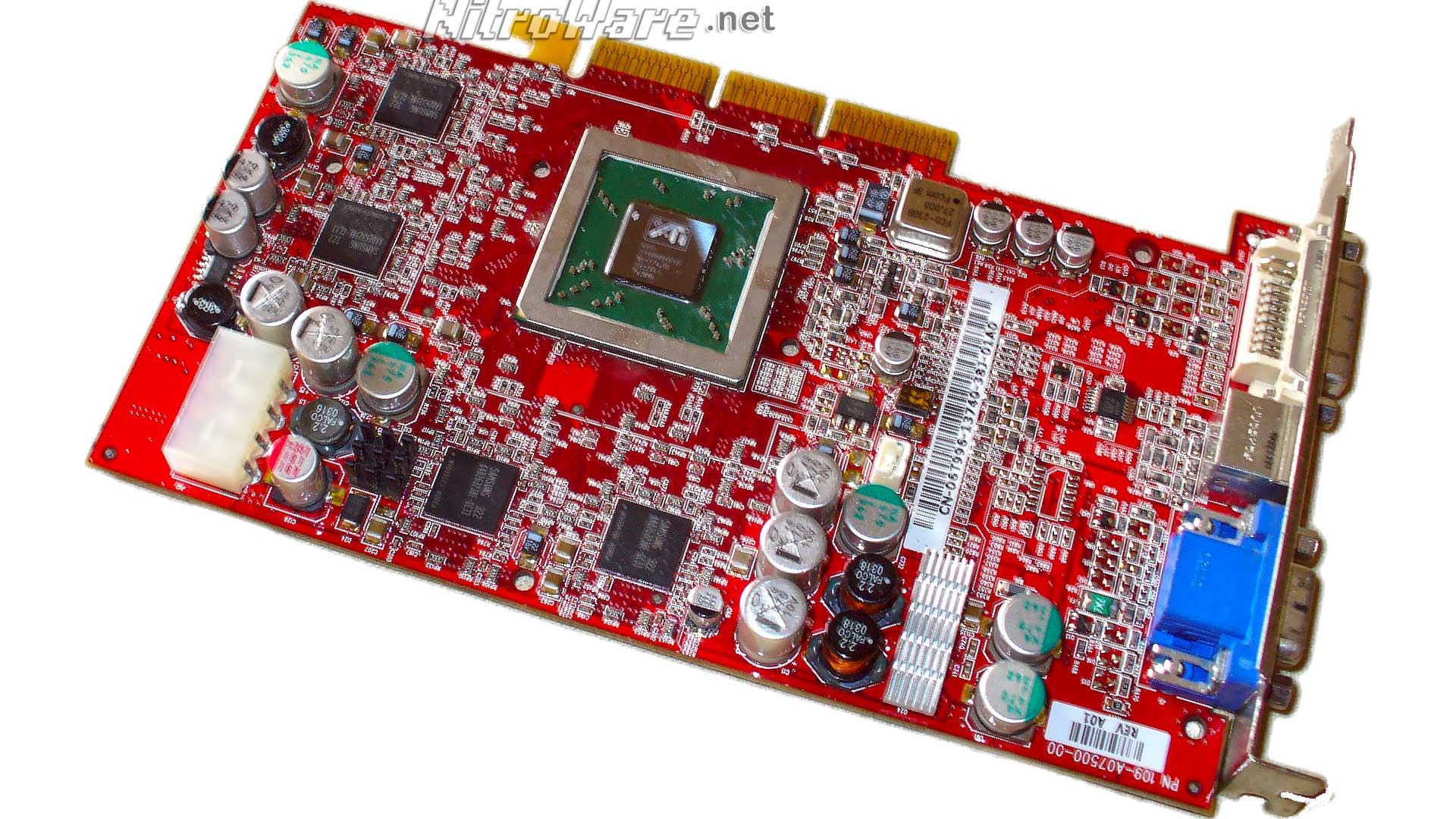

We analyse the AMD Radeon 290X Hawaii GPU's design and specs, compare AMD's and NVIDIA's current GPUs and remember Graphics Cores Past. Ten years ago, the R350-based ATI Radeon 9800 Pro, a powerful card for its time, launched; 64-bit computing became mainstream; and USB 2.0 and Wi-Fi became ubiquitous.

The 290X is the latest in what has been a successful line of single-GPU, high-end DirectX 11 Radeon parts from ATI/AMD: HD 5870, HD 6970 and HD 7970. Read on to see if Hawaii and the R9 290X continue this legacy.

Background

GPU vendors tend to take a tick-tock-like approach to releasing new graphics cards and GPU architectures. An all-new architecture is released, and over the next two years it is enhanced, updated and refined as it matures, whereas CPU vendors such as Intel have a shorter cadence of 12 months between cycles.

Consider NVIDIA's 2010 release of its 'Fermi' architecture: in mobile guise, these parts lasted through 2012 and into early 2013, until the entire product stack was replaced with 700 series Kepler Refresh parts.

At the very end of 2011, AMD introduced its Graphics Core Next architecture, which it intended to replace several older architectures. The point of this technology was for the company to have a single, very scalable graphics engine that could easily be used in both low- and high-performance solutions, and that would also go the distance, lasting several years. At some point in the future, this architecture could be updated to meet the needs of the time rather than designing a new system from scratch.

As the Radeon HD 7000 series is now nearing the end of its product cycle, AMD is announcing a new top-to-bottom stack of GPUs for 2013-2014. Some of these are built using a new GPU; others are boosted versions of the 2012-13 GCN products.

There has been some negative response to this rebranding from enthusiasts; however, this is *nothing* new. Both AMD and NVIDIA have been doing this for more than ten years, ever since NVIDIA released its NV10 'GeForce' and ATI (now AMD) its R100 'Radeon'. There is simply no reason to design a clean-sheet GPU when the current solution is more than adequate in features and its performance can be improved as the chips mature, unlike older GPUs, where performance was limited by bottlenecks in the chip's logic blocks or memory bandwidth.

For 2014, AMD has updated and enhanced Graphics Core Next, 'fixed its plumbing' and scaled it up, making it better, faster, wider and stronger.

All the lessons learnt by AMD since the fourth quarter of 2011 and chip tape-out have been applied to Hawaii's enhanced GCN design. These bug fixes and enhancements improve the end-user experience of AMD Radeon.

No microprocessor is perfect. As soon as a design is locked down, work begins on updates to the chip. The same methodology is used in the industry-standard software development life cycle. The line has to be drawn somewhere, sometime, and not all features or bug fixes make it into a product release. Those that miss out are held for the next release.

It has now been ten years since one of ATI's previous watershed moments in graphics: the ultra-successful R350-based Radeon 9800 series, with its 256-bit memory bus, which was very wide for the time. In 2013 we are looking at another watershed moment with the 'optimised for 4K' Hawaii-based 290X cards, but competition is fiercer than it was back then. The Radeon 9800 series was one of the first to offer an optional 256MB frame buffer; now the 290X offers 4GB as standard, and AMD claims it needs it.